How It's Made

One Model to Serve Them All: How Instacart deployed a single Deep Learning pCTR model for multiple surfaces with improved operations and performance along the way

Authors: Cheng Jia, Peng Qi, Joseph Haraldson, Adway Dhillon, Qiao Jiang, Sharath Rao

Introduction

Instacart Ads and Ranking Models

At Instacart Ads, our focus lies in delivering the utmost relevance in advertisements to our customers, facilitating novel product discovery and enhancing their grocery shopping journey. Concurrently, we strive to offer value to our advertisers by bolstering brand recognition, augmenting product sales, and extending their customer reach. Fulfilling these interlinked objectives on this multi-sided marketplace necessitates a strategic approach to ad-serving, particularly in terms of the algorithm governing ad rankings — an aspect that we hold to exceedingly high standards.

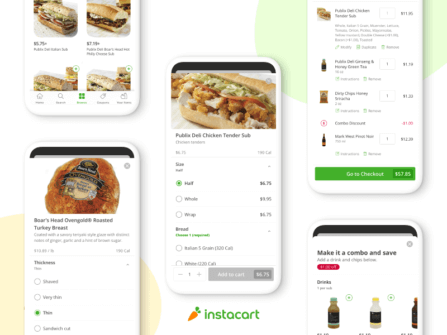

Over the course of our journey, we have created a diverse array of page layouts, each tailored to offer distinct user experiences. Our customers can directly search for specific products, or browse for products on a storefront, mirroring the process of navigating a physical grocery store. The latter — the browsing interfaces — are designed with specific intentions in mind, which include:

- Buy It Again (BIA): showcasing products that a user has previously purchased.

- Frequently Bought With (FBW): suggesting products often bought alongside items already in the users’ carts.

- Store Root: displaying products available in the store currently being viewed.

- Collections: highlighting a collection of products within the same category.

- Item Details: introducing products related to an item under inspection by the customer.

These ad surfaces may appear similar, but the key distinction lies in their contextual differences. For example, Collections feature a specific category name, while Item Details provide information related to an item the user is currently examining, and so on.

Fig-1: examples of Browse surfaces on instacart.com

Legacy XGBoost Browse pCTR Models

In the early stages of developing these surfaces, we designed basic ranking models, using XGBoost to predict ad click-through rates (CTR) and rank ads based on the predictions. As mentioned above, different surfaces tend to have varying contexts and generate unique sets of inputs and features. Consequently, whenever a new context was introduced, the team would create a new model, resulting in a growing collection of models.

Over time, as our range of browsing surfaces expanded, we accumulated a set of XGBoost-based ranking models designed specifically for advertising on different surfaces. As a result, several limitations also emerged with this approach:

- Disparate Training Datasets: The separation in training datasets made cross-sharing of user interaction insights impossible. For example, if a user displayed interest in specific products on one surface by interacting with them, this engagement data would fail to propagate to other surfaces when constructing the training data for the other pCTR models.

- XGBoost Model Limitations: The XGBoost models themselves are constrained in their capacity to incorporate certain types of features that are required to unify the models across all surfaces. Notably, accommodating high-cardinality categorical features posed a challenge, as one-hot encoding these features would result in an impossibly high input dimension. Consequently, we couldn’t incorporate collection names for Collections, product IDs for Item Details, or search terms for FBW into the model.

- Maintenance Complexity: Deploying a model for each surface introduces significant operational complexity. The effort of managing services, monitors, and incident response for both data pipelines and model serving grows linearly with the number of surfaces. Future changes are further hindered by the need to coordinate them with cross-functional stakeholders.

- Isolated Model Infrastructure: The legacy model training and serving platform for the XGBoost pCTR model relies on outdated serving infrastructure, which prevents us from taking advantage of the latest improvements offered by our company’s ML infrastructure stack. As a result, we are unable to benefit from the enhancements implemented by our cross-functional partners.

These challenges represent typical growing pains experienced by rapidly expanding companies. As our focus primarily revolved around product development to enhance customer experience and introduce new advertising platforms, infrastructure development received less attention. Consequently, we lacked the necessary training pipelines to effectively develop a unified model. Moreover, the risk associated with implementing such a unified model was exceedingly high, given that the legacy models were already deployed and serving live traffic.

Unified Browse pCTR Model

Overview

Recognizing these limitations of the legacy Browse models, we embarked on the creation of a Unified Browse pCTR model. This initiative aimed to rectify the aforementioned issues and improve the performance of pCTR models on our browsing surfaces. Our approach was to use a Deep Learning model to unify all surfaces. To achieve this, we consolidated the data generation process by coalescing data from all the disparate surfaces, as shown in Fig-2.

- Unified Dataset: Combining the training data for each surface yields a richer dataset that can train more sophisticated models. Not only is this forward compatible with new surfaces, but this also unlocks powerful cross-surface interactions and user level signals. For illustration purposes, let us consider the following naive example of a user interacting by clicking on a product within the Buy-It-Again (BIA) surface. After combining the data, this information is propagated to other surfaces, leading to an increased predicted probability of a click in those surfaces for this user-product pair.

- Deep Learning Framework: Deep learning frameworks come with several advantages, notably in handling high-cardinality features such as user IDs, product IDs, and search texts that capture preferences and intent. These features are incorporated into the model through embedding matrices to achieve dimensionality reduction while still retaining predictive power. Moreover, unlike the legacy XGBoost models, Deep Learning provides opportunities to experiment with novel model architectures and incorporate state-of-the-art ML techniques from the literature.
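To make the unified dataset idea concrete, here is a minimal sketch of coalescing per-surface impression logs into one training set. The field names (`collection_name`, `reference_product_id`), the surface keys, and the `MISSING` placeholder are hypothetical; the post does not describe the actual schema or pipeline.

```python
# Hypothetical schema: the real pipeline and field names are not described in the post.
MISSING = "__missing__"  # placeholder meaning "not available on this surface"

# Context fields that only some surfaces provide (illustrative choice).
CONTEXT_FIELDS = ["collection_name", "reference_product_id"]


def unify(per_surface_rows):
    """Merge per-surface impression logs into one list of training rows."""
    unified = []
    for surface, rows in per_surface_rows.items():
        for row in rows:
            out = {
                "surface": surface,  # low-cardinality surface indicator
                "user_id": row["user_id"],
                "product_id": row["product_id"],
                "clicked": row["clicked"],
            }
            # Fill context fields that this surface does not provide.
            for field in CONTEXT_FIELDS:
                out[field] = row.get(field, MISSING)
            unified.append(out)
    return unified


logs = {
    "bia": [{"user_id": "u1", "product_id": "p9", "clicked": 1}],
    "collections": [{"user_id": "u1", "product_id": "p9", "clicked": 0,
                     "collection_name": "fruit juices"}],
    "item_details": [{"user_id": "u2", "product_id": "p3", "clicked": 0,
                      "reference_product_id": "p7"}],
}
dataset = unify(logs)
```

Every row ends up with the same columns, so a click by `u1` on `p9` in BIA sits in the same dataset as that user's impressions on other surfaces, and a single model can learn from both.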

Fig-2: Comparison of the training data generation and model architecture of the Unified Browse pCTR model (lower panel) to the legacy Browse pCTR models (upper panel).

Training Data

The training data for the Unified Browse pCTR model encompasses the following distinct (yet not exhaustive) categories of features:

- User Features: This category covers features about the user to whom the ad impressions are presented. Through a series of iterations, we have included information such as user ID and the user’s historical views, clicks, and purchase patterns, along with supplementary behavioral signals that reveal historical user responses to sales and promotions, their propensity to repurchase a product, brand loyalty, etc., which have proven to be highly powerful features for prediction.

- Product Features: This category includes attributes of the product under evaluation. Notably, we consider product ID, name, brand, broad category, and price as significant indicators. We also include specific product attributes, such as whether the item is alcoholic, kosher, or organic. Additionally, we’ve integrated synthesized attributes, e.g., scores built on top of deep learning models that reveal more abstract relationships that can’t be fully captured through simple statistics, such as the product’s competitiveness within its category.

- Context Features: These features describe the contextual backdrop against which an ad impression is presented. Some features in this group are present for all contexts, such as the type of placement the ads appear on. The availability of other features depends on the given context. For instance, on the item detail surface (Fig-3), the product attributes of the reference product are provided to the model as features when scoring ads on this surface. As another example, on surfaces where we exhibit collections of products within a store, the collection names, such as “fruit juices” or “cleaning products,” are also included as features to the model.

Fig-3: Example of an item detail surface where a focal reference product is situated in the center of the surface, and ads under scoring appear in a carousel below the reference product.

- Mean Target Encoding: These MTE features encode the historical CTRs for specific data segments. They can either apply universally across all contexts, like historical user-product interactions, or exist specifically for certain contexts, such as historical interactions between reference and target product categories on item detail surfaces. These MTE features offer valuable insights into past engagements, serving as potent predictors of future performance. For more details on the MTE features and the streamlined pipeline for their calculation and integration into our training data, stay tuned for a forthcoming post on this topic!

- Data Sparsity and Missing Features: A notable challenge of working with context features is the substantial imputation required within the training data. For simplicity, we opted for a straightforward approach of imputing default values. This choice was motivated by the fact that default values in this context symbolize a lack of available data, a concept that the model can learn from. Moreover, considering the sheer magnitude of the data handled by our model, alternative methods such as using an auxiliary model or other statistical signals could prove computationally intractable.
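As an illustration of the Mean Target Encoding features described above, the sketch below computes the historical CTR per segment and looks it up for a new row. The function names, the row layout, and the default of `-1.0` for unseen segments are assumptions made for this example, not the production pipeline.

```python
from collections import defaultdict


def fit_mte(rows, keys):
    """Historical CTR per segment, where a segment is the tuple of `keys` values."""
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for row in rows:
        segment = tuple(row[k] for k in keys)
        impressions[segment] += 1
        clicks[segment] += row["clicked"]
    return {seg: clicks[seg] / impressions[seg] for seg in impressions}


def apply_mte(row, table, keys, default=-1.0):
    """Look up the encoded CTR; unseen segments get a default value (assumed -1.0)."""
    return table.get(tuple(row[k] for k in keys), default)


# Toy history of user-product impressions (hypothetical data).
history = [
    {"user_id": "u1", "product_id": "p9", "clicked": 1},
    {"user_id": "u1", "product_id": "p9", "clicked": 0},
    {"user_id": "u2", "product_id": "p9", "clicked": 0},
]
keys = ("user_id", "product_id")
table = fit_mte(history, keys)

seen = apply_mte({"user_id": "u1", "product_id": "p9"}, table, keys)
unseen = apply_mte({"user_id": "u3", "product_id": "p9"}, table, keys)
```

When building training data this way, the history used for a given example should contain only impressions that happened before it; otherwise the label leaks into its own feature. The default for unseen segments plays the same role as the default-value imputation described above.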

Model Architecture

Fig-4: Diagram of the Wide-and-Deep model architecture with a second order interaction component for the Unified Browse pCTR model.

The architecture of the Unified Browse pCTR model draws strong inspiration from the Wide-and-Deep architecture, which forms the foundation of numerous pCTR models for user response predictions in the industry.

Deep Side

For the deep side, our model heavily relies on three large-scale embedding matrices that are trained specifically for capturing essential information from features such as product id, user id, and various textual attributes (e.g., product name, brand name, search term).

These embedding matrices serve as a crucial component of the Deep Learning feature extraction process in our model. By leveraging these matrices, we can effectively handle the high cardinality associated with users and products, allowing us to capture the nuances and preferences that play a significant role in determining the click-through probability.

Once the embedding vectors are obtained, they are concatenated and fed through multiple fully connected layers. These layers serve as an essential mechanism for approximating the highly non-linear effects of each feature and capturing complex interactions between them. By incorporating this deep side architecture, our model can capture intricate patterns and relationships hidden within the data, leading to more accurate predictions.
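The deep side can be sketched as a plain forward pass: look up embeddings, concatenate them, and apply fully connected layers. Everything concrete here is an assumption for illustration: the table sizes, the embedding dimension, the layer widths, the ReLU activation, the averaging of token embeddings for text, and the premise that IDs have already been mapped to integer indices. Weights are random, so this shows the data flow only, not a trained model.

```python
import random

random.seed(0)
EMB_DIM = 4  # assumed embedding size, far smaller than a production model


def make_table(vocab_size, dim):
    """Randomly initialized embedding matrix: one row per ID."""
    return [[random.uniform(-0.1, 0.1) for _ in range(dim)]
            for _ in range(vocab_size)]


def make_dense(n_in, n_out):
    """Randomly initialized fully connected layer as (weights, bias)."""
    weights = [[random.uniform(-0.5, 0.5) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense_relu(x, layer):
    """Affine transform followed by ReLU."""
    weights, bias = layer
    return [max(0.0, sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, bias)]


# Three embedding tables: users, products, and text tokens (sizes are made up).
user_table = make_table(1000, EMB_DIM)
product_table = make_table(500, EMB_DIM)
token_table = make_table(2000, EMB_DIM)


def embed_text(token_ids):
    """Average token embeddings, one simple way to pool variable-length text."""
    vectors = [token_table[t] for t in token_ids]
    return [sum(col) / len(vectors) for col in zip(*vectors)]


layers = [make_dense(3 * EMB_DIM, 8), make_dense(8, 4)]


def deep_side(user_idx, product_idx, token_ids):
    # Concatenate the three embedding vectors into one input vector.
    x = user_table[user_idx] + product_table[product_idx] + embed_text(token_ids)
    for layer in layers:
        x = dense_relu(x, layer)
    return x


hidden = deep_side(user_idx=42, product_idx=7, token_ids=[3, 15, 99])
```

The point of the embedding tables is that a user or product with millions of possible values becomes a short dense vector, instead of a one-hot input whose width equals the vocabulary size.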

Wide Side

On the wide side of our model, we carefully select a range of features to include low cardinality categorical attributes, especially surface type indicators, as well as continuous features. These wide side features, along with the embedding vectors, are concatenated before we introduce pairwise interactions between these features by employing a classical factorization machine. This allows us to capture and adjust coefficients based on the surface types, leading to the ability to model specific distinctions between different surfaces in a more flexible manner.

Second-order interaction

There’s a wealth of literature stressing the importance of explicitly modeling both shallow and higher-order interactions between features in response prediction tasks, for example xDeepFM, PNN, and DCN V2. In the first iteration of our model, we decided to incorporate a basic second-order interaction layer via a factorization machine, primarily for its simplicity of implementation and demonstrated efficacy in boosting the model’s performance. In our tests, this architecture change resulted in a notable improvement of 1% in both the test log loss and test AUC metrics. To provide some context, in certain experiments, a 1.8% increase in AUC led to a remarkable boost of over 12% in click-through rates on Browse surfaces. Hence, a 1% improvement from the architecture change alone carries substantial impact. This improvement highlights the effectiveness of incorporating these interactions in enhancing the overall performance and capturing additional intricacies in the unified Browse pCTR model.
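For readers unfamiliar with factorization machines, the sketch below shows the second-order term of a classical FM in two equivalent forms: the direct sum over feature pairs, and the standard linear-time rewrite. It uses scalar features with one latent vector each, which is the textbook formulation; how the production model wires embeddings and wide features into this layer is not specified here, and the values of `x` and `v` are made up.

```python
from itertools import combinations


def fm_pairwise_naive(x, v):
    """Sum over i < j of <v[i], v[j]> * x[i] * x[j]; cost O(n^2 * k)."""
    total = 0.0
    for i, j in combinations(range(len(x)), 2):
        dot = sum(a * b for a, b in zip(v[i], v[j]))
        total += dot * x[i] * x[j]
    return total


def fm_pairwise_fast(x, v):
    """Same quantity computed per latent dimension f as
    0.5 * sum_f [(sum_i v[i][f] * x[i])^2 - sum_i (v[i][f] * x[i])^2]; cost O(n * k).
    """
    k = len(v[0])
    total = 0.0
    for f in range(k):
        s = sum(v[i][f] * x[i] for i in range(len(x)))
        sq = sum((v[i][f] * x[i]) ** 2 for i in range(len(x)))
        total += s * s - sq
    return 0.5 * total


# Toy input: e.g. an active surface indicator, an inactive one, a continuous feature.
x = [1.0, 0.0, 2.0]
# One latent vector of size k=2 per feature (hypothetical values).
v = [[0.1, 0.2], [0.3, -0.1], [-0.2, 0.4]]

naive = fm_pairwise_naive(x, v)
fast = fm_pairwise_fast(x, v)
```

Both functions return the same value up to floating-point rounding (0.12 for this input). Because a surface indicator is one of the features, its latent vector interacts with every other feature, which is one way a single model can adjust its behavior per surface.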

Model Performance

We performed extensive evaluations comparing the new Unified Browse pCTR model to the legacy Browse pCTR models. The new model showed significantly better performance both offline and online.

Offline

We checked a series of offline test metrics, with the focus on test log loss, test AUC-ROC, test AUC-PR as well as test calibration. We also performed feature importance studies to assess the impact of the features included. The offline metrics are omitted here as we effectively use the same metrics offline and online, and online metrics will be reported in the next section.
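As a reference for the metrics named above, here is a minimal sketch of log loss, AUC-ROC, and a simple calibration measure on toy data. The calibration definition used here (mean predicted CTR divided by observed CTR) is an assumption, since the post does not define its calibration metric, and AUC-PR is left out for brevity.

```python
import math


def log_loss(labels, preds, eps=1e-12):
    """Average negative log-likelihood of binary labels under the predictions."""
    total = 0.0
    for y, p in zip(labels, preds):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(labels)


def auc_roc(labels, preds):
    """Probability that a random positive outscores a random negative (ties count half)."""
    pos = [p for y, p in zip(labels, preds) if y == 1]
    neg = [p for y, p in zip(labels, preds) if y == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0
               for a in pos for b in neg)
    return wins / (len(pos) * len(neg))


def calibration_ratio(labels, preds):
    """Mean predicted CTR over observed CTR; 1.0 means unbiased on average."""
    return (sum(preds) / len(preds)) / (sum(labels) / len(labels))


# Toy labels (1 = click) and predicted click probabilities.
labels = [1, 0, 0, 1, 0]
preds = [0.8, 0.3, 0.1, 0.4, 0.5]

loss = log_loss(labels, preds)
auc = auc_roc(labels, preds)
calib = calibration_ratio(labels, preds)
```

AUC measures ranking quality only, while calibration measures whether the predicted probabilities match observed click rates, which matters when the predictions feed into auction pricing. The pairwise AUC shown here is quadratic in the number of examples and is meant for illustration, not for large evaluation sets.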

Online

Compared to the legacy Browse XGBoost pCTR models, the Unified Browse pCTR model has achieved significant improvements in performance online.

AUC-PR: The Unified Browse pCTR model has achieved a 10% improvement over legacy Browse pCTR models on the BIA surface, 48% on the Store Root surfaces, and a whopping 190% improvement on Collection and other surfaces.

Fig-5: Breakdown of AUC-PR by surface

AUC-ROC: The Unified Browse pCTR model has achieved a 4.8% improvement over legacy Browse pCTR models on the Store Root surfaces, 20% on the Collection surfaces, and 18% on the Other surfaces.

Fig-6: Breakdown of AUC-ROC by surface

AUC-ROC on the BIA surface didn’t see a major lift during the first model rollout due to some discrepancies between online and offline inputs after the model was shipped. However, after resolving this issue in later updates, we significantly boosted performance on that surface, beating the legacy model by 2% on AUC-ROC.

Calibration: The Unified Browse pCTR model has achieved around 64%-77% improvement over legacy Browse XGBoost pCTR models on all surfaces except BIA. It significantly reduced the bias of the model and provided fairer second-price-auction (2PA) pricing for our auction systems.

Fig-7: Breakdown of the calibration by surface

Impacts

A/B tests showed that the new model substantially improved incremental sales and return on ad spend (ROAS) for advertisers, and that onboarding to the new model serving platform decreased end-to-end ads latency. Moreover, it significantly increased overall cart adds, demonstrating its ability to increase sales without cannibalizing organic conversions. It was truly a triple win for Instacart, our customers, and our advertising partners.

What’s more exciting: consolidating the model allowed us to iterate more efficiently. Two weeks after the initial rollout, the team launched a second iteration of the model, which incorporated real-time features from the Ads Marketplace team, along with short-term and long-term user history features including those from user modeling to give the performance yet another boost. This iteration again improved ROAS for the advertisers and increased profit per user.

Combining the two rollout stages led to significant improvements in advertiser incrementality and performance, and substantial gain in cart adds, by raising the quality of ads shown to Instacart customers, while reducing end-to-end latency and operational overhead for Instacart. This achievement testifies to the impact of investment in ML expertise and infrastructure.

Learnings & Conclusions

- Unified modeling: By consolidating data and training a single unified model, we were able to improve user profiling and enhance performance across various browsing surfaces.

- Deep Learning advantages: Leveraging Deep Learning frameworks enabled us to integrate high-cardinality features and incorporate contextual information for all surfaces. Deep Learning frameworks also capture complex interactions, resulting in more accurate predictions and improved model performance.

- Missing values: Even though different Browse surfaces have unique sets of contexts, Deep Learning models can learn the meanings of missing values. Even without employing complicated imputation techniques, the model’s performance remains unaffected.

- Shallow interactions: We also introduced shallow interactions through factorization machines, which led to a notable 1% improvement in metrics such as log loss and AUC. This demonstrates the effectiveness of this technique in enhancing model performance.

- Iterative improvements: The ability to iterate and optimize the model quickly by incorporating new features and experimenting with different model architectures is crucial for maintaining a streamlined ML workflow and achieving ongoing success in machine learning.
