How It's Made

Introducing Griffin 2.0: Instacart’s Next-Gen ML Platform

Authors: Rajpal Paryani, Han Li, Sahil Khanna, Walter Tuholski

Background

Griffin is Instacart’s Machine Learning (ML) platform, designed to enhance and standardize the process of developing and deploying ML applications. It significantly accelerated ML adoption at Instacart by tripling the number of ML applications within a year. In our earlier post, we explained how a combination of MLOps tools allowed us to manage features efficiently, train models, evaluate performance, and deploy them as real-time services.

Although these tools accelerated ML at Instacart, the command-line and GitHub commit-based interfaces posed a steep learning curve for users. They were also difficult for the ML infrastructure team to maintain and offered no centralized view for managing all ML workloads. In this blog post, we describe how consolidating our tools into a service enabled us to leverage the latest developments in the MLOps field, make Griffin more user-friendly, simplify operations, and introduce new capabilities such as distributed training and LLM fine-tuning for both current and future ML applications.

A Retrospective Look at Griffin 1.0

When our team started building the first version of Griffin, we placed significant emphasis on designing a system that prioritized extensibility and versatility. To create a seamless experience for all ML applications running on our platform, we introduced a series of tools and interfaces, including:

1. Containerized Environments: We provided project templates to establish containerized environments for all ML applications by leveraging Docker, ensuring consistent builds throughout the stages of prototyping, training, and inference.

2. CLI Tools: We developed a comprehensive set of command-line interface (CLI) tools to handle the development-to-production process. This includes:

a. MLCLI: to facilitate the development of containerized environments for feature engineering, training, and serving.

b. ML Launcher: to integrate with multiple third-party ML backends to execute container runs on heterogeneous hardware platforms.

c. Workflow Manager: to schedule and manage ML pipelines.

3. Feature Marketplace: to manage feature computation, versioning, sharing, and discoverability, effectively eliminating offline/online feature drift.

4. Inference Service: We implemented the ability to deploy selected models on Twirp (an RPC framework) and AWS ECS (a managed container orchestration service) to support real-time applications.

Learnings From Griffin 1.0

In Griffin 1.0, we provided a comprehensive suite for end-to-end ML. While the platform facilitated many ML projects at Instacart, it revealed several limitations that prompted the development of Griffin 2.0:

1. Steep Learning Curve:

a. In-house Command-line Tools: Despite extensive documentation and interactive training sessions, MLEs took several days to weeks to become proficient in using the in-house command-line tools.

b. Deployment Process: MLEs needed to know how to create AWS ECS (Elastic Container Service) services to launch their inference service, introducing unnecessary complexity.

c. System Tuning: Achieving optimal performance required proficiency in tuning system parameters, such as the number of Gunicorn threads, which fell outside the domain knowledge of MLEs and data scientists.

2. Lack of Standardization: Interactions heavily relied on GitHub Pull Requests (PRs), requiring MLEs to customize multiple PRs for tasks like creating a new project, indexing features, setting up Airflow DAGs, and establishing real-time inference services through Terraform. A more standardized approach was lacking.

3. Unoptimized Scalability: The backend infrastructure of Griffin 1.0 lacked key capabilities, which made it infeasible to scale further. For example:

a. Horizontal Scalability: The training platform of Griffin 1.0 was vertically scalable only, limiting possibilities like distributed training and LLM fine-tuning.

b. Scalable Model Registry: The MLflow-based backend for the model registry couldn’t effectively handle the required load of hundreds to thousands of queries per second.

4. Fragmented User Experience: Integration with various third-party vendor solutions resulted in a fragmented user experience, forcing MLEs to switch between platforms for a comprehensive view of their workloads.

5. Lack of Metadata Management: The client-facing CLI tools initiated feature engineering and training workloads in a ‘fire-and-forget’ manner, making it challenging to retrieve metadata later. Additionally, managing training-serving lineage for a seamless transition of trained models into production was not straightforward.

This post gives an overview of how Griffin 2.0 addresses these limitations. Future posts will take a more detailed look at the specific efforts made for each aspect of Griffin.

Introducing: Griffin 2.0

Our Vision

In the Griffin redesign, our objectives are as follows:

- User-Friendly Interface: We’re prioritizing a user-friendly web interface over CLIs and PRs. This interface streamlines every aspect of ML DevOps, enabling tasks like feature creation, training job setup, workflow monitoring, and real-time ML service deployment with just a few clicks.

- Unified Platform: Griffin 2.0 aims to be a comprehensive platform that consolidates all functionality in one place. This includes seamless integration with external systems like Datadog, MLflow, and ECS, all accessible through a unified UI.

- Centralized Data Store: Our focus is on effectively managing the metadata for the entire ML lifecycle. This involves persisting successfully indexed features for future modeling and storing models in the registry after successful training runs.

- New Capabilities: We want the infrastructure of our next-gen platform to be ready for emerging capabilities and the latest advancements in ML, such as distributed computation to support distributed training and fine-tuning of Large Language Models (LLMs).

- Improved Scalability: Griffin 2.0 is designed to be highly scalable, exemplified by a highly optimized and scalable serving platform that meets the escalating demand for real-time ML.

Building Blocks

API Service

Griffin 2.0 has transitioned from CLI and GitHub PRs to REST APIs, offering a more intuitive user interface. These APIs enable the creation of features, submission of training jobs, registration of new models, and establishment of inference services.

The Griffin UI leverages these APIs to provide a seamless access point to various backend applications. Additionally, we’ve made many of these APIs accessible through the Griffin SDK, which lets different clients automate tasks within Griffin. For instance, BentoML, our in-house cloud-based ML notebook development environment, is one such client that MLEs can use to submit their training jobs to Griffin.
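To make the API-first workflow concrete, here is a minimal sketch of how a client might build a training-job submission request. The host, the `/v1/training-jobs` path, and every payload field are hypothetical, since this post does not document the actual Griffin API or SDK. The request is constructed but never sent.

```python
import json
import urllib.request

# Hypothetical base URL; the real Griffin API host is not described in this post.
GRIFFIN_API = "https://griffin.example.internal/api"


def build_training_job_request(project: str, runtime: str, workers: int) -> urllib.request.Request:
    """Build (but do not send) a POST request that submits a training job."""
    if workers < 1:
        raise ValueError("workers must be at least 1")
    # All field names below are illustrative assumptions.
    payload = {
        "project": project,
        "runtime": runtime,
        "resources": {"workers": workers},
    }
    return urllib.request.Request(
        url=f"{GRIFFIN_API}/v1/training-jobs",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_training_job_request("demo-project", "lightgbm", workers=4)
print(request.get_method(), request.full_url)
print(json.loads(request.data))
```

Because the UI and the SDK would both go through the same kind of request, any validation done on the server side applies equally to clicks in the UI and to automated clients.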

ML Training Platform

In Griffin 2.0, we leveraged Ray for our ML Training Platform to enable horizontally scaled ML training in a distributed computing environment. This work was also highlighted in success stories released earlier this year.
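The idea behind horizontally scaled training can be illustrated with a small data-parallel sketch: each worker computes a gradient on its own shard of the data, and the gradients are averaged before the shared weight is updated. This is not the Griffin implementation and does not use Ray; a standard-library thread pool stands in for the distributed workers, and the one-parameter linear model is purely illustrative.

```python
from concurrent.futures import ThreadPoolExecutor


def shard_gradient(weight, shard):
    """Mean-squared-error gradient for y ~ weight * x on one data shard."""
    return sum(2 * (weight * x - y) * x for x, y in shard) / len(shard)


def train(data, num_workers=4, steps=50, learning_rate=0.05):
    # One shard per worker; sizes are equal when len(data) is a multiple of num_workers.
    shards = [data[i::num_workers] for i in range(num_workers)]
    weight = 0.0
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        for _ in range(steps):
            # Each worker computes a gradient on its own shard in parallel.
            grads = list(pool.map(lambda s: shard_gradient(weight, s), shards))
            # With equal shard sizes, the plain average equals the full-batch gradient.
            weight -= learning_rate * sum(grads) / len(grads)
    return weight


# Synthetic data generated from y = 3x.
data = [(x, 3.0 * x) for x in (0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5)]
learned = train(data)
print(round(learned, 4))
```

In a real cluster the shards would live on different machines, so adding machines lets the training set grow without any single node having to hold all of it.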

We’ve also unified various training backend platforms on Kubernetes to simplify managing multiple third-party backends and create a universal and consistent approach for training jobs.

For faster ML development, we’ve provided users with configuration-based runtimes for TensorFlow and LightGBM (more to come in the future), covering data processing, feature transformation, training, evaluation, and batch inference. This standardizes Python libraries and minimizes conflict resolution complications.
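As a rough illustration of what a configuration-based runtime might look like, the sketch below models a runtime configuration as a small data class and validates it before use. The class name, field names, and stage identifiers are assumptions made for this example; only the two frameworks and the list of covered steps come from the description above.

```python
from dataclasses import dataclass, field

# Frameworks named in this post; the stage names mirror the steps it lists.
SUPPORTED_FRAMEWORKS = {"tensorflow", "lightgbm"}
KNOWN_STAGES = (
    "data_processing",
    "feature_transformation",
    "training",
    "evaluation",
    "batch_inference",
)


@dataclass
class RuntimeConfig:
    """Hypothetical runtime configuration; not the actual Griffin schema."""

    framework: str
    stages: list = field(default_factory=lambda: list(KNOWN_STAGES))
    params: dict = field(default_factory=dict)

    def validate(self) -> list:
        """Return a list of human-readable problems; empty means valid."""
        errors = []
        if self.framework not in SUPPORTED_FRAMEWORKS:
            errors.append(f"unsupported framework: {self.framework}")
        for stage in self.stages:
            if stage not in KNOWN_STAGES:
                errors.append(f"unknown stage: {stage}")
        return errors


good = RuntimeConfig(framework="lightgbm", params={"num_leaves": 31})
bad = RuntimeConfig(framework="xgboost", stages=["training", "deploy"])
print(good.validate())
print(bad.validate())
```

Keeping the user-facing surface to a declarative configuration is what allows the platform to pin library versions once, inside the runtime, instead of in every project.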

We recently presented our Griffin ML Training Platform story at the Ray Summit 2023. Soon, we’ll be sharing a blog post with more technical details about the ML Training Platform.

ML Serving Platform

In Griffin 2.0, we’ve significantly streamlined and automated the process of deploying models and establishing inference services through the ML Serving Platform. This platform includes key components:

- Model Registry — stores model artifacts.

- Control Plane — facilitates easy deployment of models via the UI.

- Proxy — manages experiments between different model versions.

- Worker — executes tasks like feature retrieval, preprocessing, and model inference.

This architectural setup allows us to fine-tune service resources, reduce latency, scale to more requests, and reduce maintenance effort. With these improvements, we’ve drastically reduced the time required to set up an inference service and achieved substantial latency optimization for real-time inference. Stay tuned for a detailed technical blog post on the Serving Platform.
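One common way for a proxy to run experiments between model versions is deterministic, weight-based traffic splitting. The sketch below shows that general technique; it is an assumption about how such a proxy could work, not a description of the Griffin Proxy, and the function name and version labels are hypothetical.

```python
import hashlib


def choose_model_version(request_key: str, weights: dict) -> str:
    """Deterministically map a request key to a model version by traffic weight."""
    total = sum(weights.values())
    if total <= 0 or any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative and sum to a positive number")
    # Hash the key to a stable position in [0, 1).
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    position = int.from_bytes(digest[:8], "big") / 2**64
    cumulative = 0.0
    chosen = None
    for version, weight in sorted(weights.items()):
        if weight == 0:
            continue  # Versions with no traffic are never selected.
        chosen = version
        cumulative += weight / total
        if position < cumulative:
            break
    return chosen


weights = {"v1": 90, "v2": 10}
counts = {"v1": 0, "v2": 0}
for i in range(10_000):
    counts[choose_model_version(f"user-{i}", weights)] += 1
print(counts)
```

Hashing the request key instead of drawing a random number means the same user is always routed to the same model version, which keeps experiment results consistent across requests.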

Feature Marketplace

In Griffin 2.0, the Feature Marketplace is a robust platform that facilitates feature computation, ingestion, discoverability, access, and shareability. We’ve introduced a user-friendly UI-based workflow that significantly streamlines the creation of new feature sources and the computation of features. To enhance the quality of generated features, we’ve implemented data validation, enabling us to catch errors in feature generation much earlier in the process. Additionally, we’ve implemented intelligent storage optimization and access patterns to ensure low-latency access to features.
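The kind of data validation described here can be sketched as a schema check that runs over generated feature rows before they are ingested. The schema format, feature names, and error labels below are all hypothetical and chosen only for illustration.

```python
import math


def validate_feature_rows(rows, schema):
    """Check each row against a schema of {name: (type, min, max)}.

    Returns a list of (row_index, feature_name, problem) tuples.
    """
    problems = []
    for index, row in enumerate(rows):
        for name, (expected_type, low, high) in schema.items():
            value = row.get(name)
            if value is None:
                problems.append((index, name, "missing"))
            elif not isinstance(value, expected_type) or isinstance(value, bool):
                problems.append((index, name, "wrong type"))
            elif isinstance(value, float) and math.isnan(value):
                problems.append((index, name, "NaN"))
            elif not (low <= value <= high):
                problems.append((index, name, "out of range"))
    return problems


# Hypothetical feature definitions and sample rows.
schema = {
    "orders_last_30d": (int, 0, 10_000),
    "avg_basket_value": (float, 0.0, 100_000.0),
}
rows = [
    {"orders_last_30d": 12, "avg_basket_value": 54.2},
    {"orders_last_30d": -3, "avg_basket_value": float("nan")},
    {"orders_last_30d": 5},
]
for problem in validate_feature_rows(rows, schema):
    print(problem)
```

Running a check like this at feature-generation time surfaces bad values before they reach training or online serving, where they are much harder to trace.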

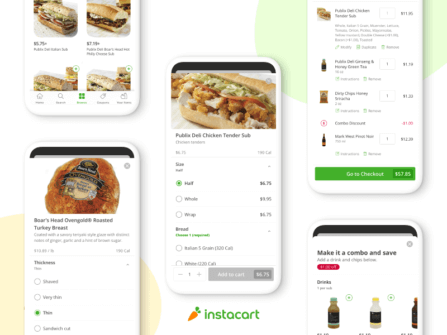

Griffin UI

Last but not least, in Griffin 2.0, we’ve developed a comprehensive UI for MLEs and data scientists to access all the aforementioned systems in one centralized service. Below is an overview of the Griffin UI:

Feature Definition: MLEs start by defining their features in the “Feature Sources” part of the UI. We support both “Batch Feature Sources” that use SQL queries and “Real Time features” that support Flink SQL/Flink Scala Code to create features.

Workflow Management: After creating features, MLEs navigate to the “Workflows” section in the UI to submit a new workflow for training, evaluation, and scoring of their ML Models. Griffin then executes this workflow based on the specified cadence in the definition.

Workflow Details: MLEs can access the workflow detail page for additional information on previous runs. They also have the option to modify any of the details outlined in the workflow definition.

Model Registry: If model training and evaluation are successful, the model is stored in the Griffin Model Registry. The screenshot displays various versions of a “test Griffin model”.

Endpoint Creation: Once a model is available, Griffin users can create a new “endpoint” to host a real-time machine learning service within our service infrastructure.

The screenshots demonstrate how MLEs can seamlessly create an ML Service end-to-end in Griffin with just a few clicks in the UI. Griffin also incorporates validation at different stages, allowing us to identify and rectify errors earlier in the process. This feature aids in cost savings by preventing the execution of actual jobs on our computing infrastructure when errors are detected.
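The cost-saving effect of early validation can be sketched as a submit function that only launches a job once the workflow definition passes its checks. The definition fields, cadence values, and function names are assumptions for illustration, not the actual Griffin workflow schema.

```python
VALID_CADENCES = {"hourly", "daily", "weekly"}  # hypothetical cadence values


def validate_workflow(definition: dict, known_features: set) -> list:
    """Return a list of problems with a workflow definition; empty means valid."""
    errors = []
    if not definition.get("name"):
        errors.append("name is required")
    if definition.get("cadence") not in VALID_CADENCES:
        errors.append(f"invalid cadence: {definition.get('cadence')!r}")
    missing = sorted(set(definition.get("features", [])) - known_features)
    if missing:
        errors.append(f"unknown features: {', '.join(missing)}")
    return errors


def submit_workflow(definition, known_features, launch):
    """Call `launch` only when validation passes, so bad definitions use no compute."""
    errors = validate_workflow(definition, known_features)
    if errors:
        return {"status": "rejected", "errors": errors}
    return {"status": "submitted", "job": launch(definition)}


launched = []


def fake_launch(definition):
    # Stand-in for starting a real job on the compute infrastructure.
    launched.append(definition["name"])
    return f"job-{len(launched)}"


known = {"orders_last_30d", "avg_basket_value"}
ok = submit_workflow(
    {"name": "ranker", "cadence": "daily", "features": ["orders_last_30d"]},
    known,
    fake_launch,
)
rejected = submit_workflow(
    {"name": "ranker", "cadence": "every-minute", "features": ["clicks"]},
    known,
    fake_launch,
)
print(ok)
print(rejected)
print(launched)
```

Only the valid definition reaches `fake_launch`; the rejected one returns its errors immediately without consuming any compute.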

Conclusion

Instacart is dedicated to enhancing user experiences through the application of ML. To achieve this, our ML infrastructure must continually evolve, addressing inefficiencies and introducing new capabilities. Griffin 2.0 represents a significant leap forward in this endeavor.

The development of Griffin 2.0 was a collaborative effort involving several internal teams, including Core Infrastructure, Ads Infrastructure, Data Engineering, and the ML Foundations team. While much progress has been made, there is ongoing work to refine Griffin 2.0. We are actively gathering feedback, making further enhancements, and fostering adoption to realize the vision outlined in this blog.

Since our early days, we’ve witnessed rapid advancements in MLOps. The emergence of technologies like ChatGPT has revolutionized the utilization of Large Language Models (LLMs) across various industries. Our company is at the forefront of these developments. The guiding principles behind Griffin 2.0, including centralized feature and metadata management, distributed computation capabilities, and standardized, optimized serving mechanisms, ensure that our ML infrastructure is well-prepared for advanced applications like LLM training, fine-tuning, and serving in the future. We are committed to adapting and advancing our platform and vision to keep pace with the rapidly evolving world of ML.
